Why (and how) we run A/B tests in digital finance: Real-world advice from a tech builder

Mastercard Strive

This post is by Lucinda Revell, co-founder of Boost Capital, an organization that enables financial service providers to serve customers via popular chat platforms such as Facebook Messenger, Telegram, and WhatsApp, so customers don’t need to download another app. Boost Capital has partnered with Mastercard Strive to support the digitalization of Filipino and Cambodian small businesses.

77% of companies with a digital presence run A/B tests, Boost among them. Here we share our insights on how best to run A/B tests specifically for financial service providers. A/B testing is particularly valuable for digital financial solutions because it grounds decision-making in evidence rather than in assumptions or intuition.

Boost provides white-labeled onboarding tech for financial service providers (FSPs) such as banks and insurance companies, so they can onboard customers, including small businesses, through digital channels. FSPs face dual, often conflicting, KPIs:

- A mandate to grow: FSPs with growth targets need to either find new customers or increase usage among existing ones. These FSPs often target small businesses specifically because, unlike pure consumer lending, productive business loans are structured to help borrowers generate additional business profits, which in turn increases their ability to repay. Such productive loans are sustainable win-wins for the borrower and the FSP. The other segment FSPs often seek to serve is the currently underserved: individuals and enterprises that have historically been excluded from formal financial systems. Extending credit to this group isn’t just about opening up new markets; it also fulfills the industry’s larger promise of enabling progress without creating cycles of over-indebtedness. Again, this is good for the customer and, ultimately, good for the bank.

- Minimizing “non-performers”: Customers who default on loans, or who open savings accounts and then leave them dormant, weigh heavily on a financial institution’s balance sheet and operating expenses. With too many “non-performers”, costs (both operational and capital-related) quickly become unsustainable. Small businesses, many of which face challenges that lead to uneven cash flow or business closure, are particularly risky clients for FSPs to take on.

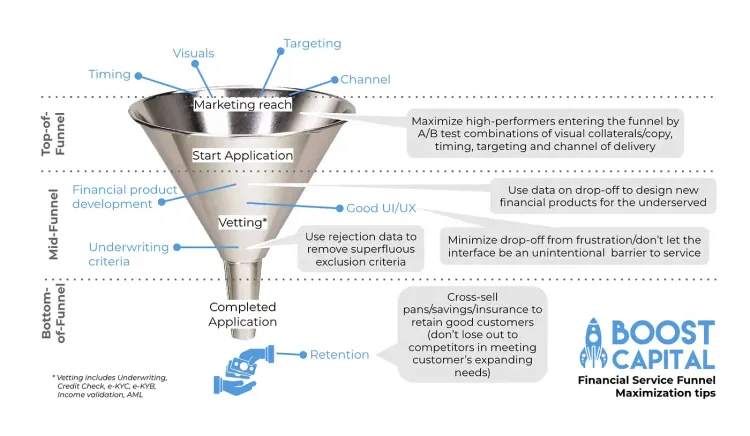

Thus, the difficult balance when growing as an FSP and increasing access to finance, especially for small businesses, is to serve the widest possible number of “good” customers: those who repay loans or actively use their deposit accounts. Widening “good” customer reach requires addressing this goal at multiple points in the customer funnel.

Great advice … but how? Examining our A/B tests on improving UI/UX for small businesses

As you can tell from what we’ve said so far, we advise taking a data-driven approach. And to put that into practice, we at Boost love A/B testing.

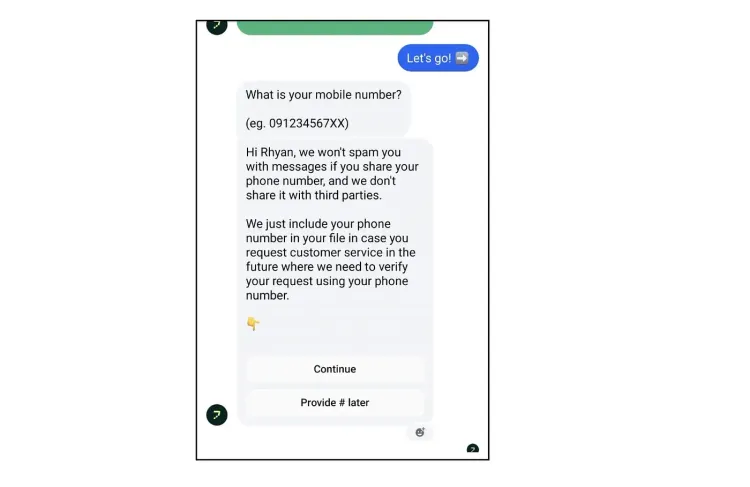

Boost’s tech covers the entire onboarding funnel. In the mid-funnel, we’ve A/B tested UI/UX to minimize what we internally call “silly drop-off,” the lowest-hanging fruit of funnel optimization: good customers lost simply because the UI/UX of the onboarding channel drives them nuts. We created a chat-based onboarding flow that asked for a merchant’s phone number very early on, within the first few questions, so that if an applicant dropped off, we could still use the phone number as part of a customer identifier system. But 32% of merchants stopped without providing a phone number.

First, we tried nudging those applicants who didn’t give a phone number with a message reassuring them that they would not receive spam. The nudge was sent 15 minutes after the first request for a phone number went unanswered.
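The nudge timing can be sketched as a small scheduling check. This is a minimal sketch, assuming the flow records when the phone number was first requested; the function name, nudge labels, and signature are hypothetical. The 15-minute reassurance nudge (treatment group only) and the generic three-hour nudge from Boost’s standard flow (all applicants) are the timings reported in this post.

```python
from datetime import datetime, timedelta

# Timings from the post; names and signature below are hypothetical.
REASSURANCE_DELAY = timedelta(minutes=15)  # "we won't spam you" nudge, treatment group only
GENERIC_DELAY = timedelta(hours=3)         # generic nudge in the standard flow, all applicants


def due_nudges(asked_at: datetime, now: datetime, in_treatment: bool,
               answered: bool, already_sent: set) -> list:
    """Return the nudges that should be sent now for an unanswered phone-number request."""
    if answered:
        return []  # applicant already gave a phone number; nothing to send
    waited = now - asked_at
    due = []
    if in_treatment and waited >= REASSURANCE_DELAY and "reassurance" not in already_sent:
        due.append("reassurance")
    if waited >= GENERIC_DELAY and "generic" not in already_sent:
        due.append("generic")
    return due


asked = datetime(2024, 1, 1, 9, 0)
print(due_nudges(asked, asked + timedelta(minutes=20), True, False, set()))  # ['reassurance']
```

In practice a check like this would run from a timer or job queue. The design point is that control and treatment differ only in the reassurance nudge, so any difference in return rates can be attributed to it.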

This nudge worked a bit. In the control group (applicants who dropped off but didn’t receive the nudge), 24.3% eventually returned to provide a phone number; our standard flow already included a generic nudge sent at the three-hour mark. In the group that did receive the nudge, 26.5% returned to provide a phone number.
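To put "worked a bit" in numbers, the rates above can be compared as absolute and relative lift. This is a minimal sketch; the `lift` helper is hypothetical. The post doesn’t report group sizes, so this arithmetic alone can’t say whether the difference is statistically significant.

```python
def lift(control_rate: float, treatment_rate: float) -> tuple:
    """Return (absolute lift in percentage points, relative lift in percent)."""
    absolute_pp = (treatment_rate - control_rate) * 100
    relative_pct = (treatment_rate - control_rate) / control_rate * 100
    return absolute_pp, relative_pct


# Return rates from the nudge experiment: control 24.3%, nudged group 26.5%.
abs_pp, rel_pct = lift(0.243, 0.265)
print(f"{abs_pp:.1f} pp absolute, {rel_pct:.1f}% relative")  # 2.2 pp absolute, 9.1% relative
```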

But we thought, surely we can do better. What if we asked for the phone number later in the process? Would this reduce merchant drop-off even more?

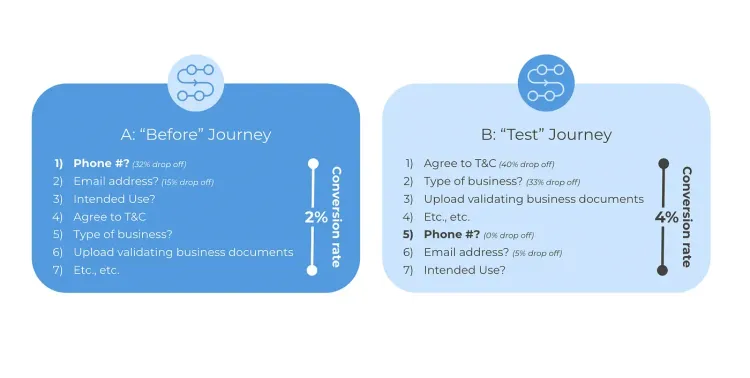

We conducted another A/B test in which we waited to ask for the phone number until after asking for information about the merchant’s business (i.e., later in the application process, not up front). We hypothesized that merchants would be more willing to share business information than personal contact details at the start, because they might worry that we would spam them with SMS or call them before they were committed. We ran this A/B test over two weeks.
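The two journeys differ only in where the phone-number question sits. A minimal sketch of that as flow configuration follows; the question IDs are hypothetical, and it simplifies journey A by asking for the phone number first (the post says only "within the first few questions").

```python
# Hypothetical question IDs; the actual Boost flow is not described in detail.
BUSINESS_QUESTIONS = ["business_name", "business_type", "monthly_revenue"]
PHONE_QUESTION = "phone_number"


def question_order(variant: str) -> list:
    """Journey A asks for the phone number up front; journey B asks after business info."""
    if variant == "A":
        return [PHONE_QUESTION, *BUSINESS_QUESTIONS]
    if variant == "B":
        return [*BUSINESS_QUESTIONS, PHONE_QUESTION]
    raise ValueError(f"unknown variant: {variant!r}")
```

Keeping the question set identical and changing only the order keeps the test clean: any difference in completion can be attributed to when the phone number is requested.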

The result? Overall conversion for the B “test” journey (where we asked for the phone number later) was double that of the A “before” journey (where we asked for the phone number early). Merchants who provided business information first and their phone number later were, on average, far more likely to complete their digital application.

The downside? We collected far fewer phone numbers overall: 19% of merchants who started a B method application provided their phone number, compared with 68% of merchants who started an A method application. But the goal was never to collect phone numbers; it was to onboard merchants for the FSP by gathering complete applications. Doubling the number of completed applications is a huge win!

Sounds great! How can I implement A/B testing too?

Conducting A/B testing is far easier on digital onboarding channels than in physical bank branches. You don’t have to train a team of customer-facing staff on a new process, ask them to monitor the results, and also monitor a control group following the established process. You can run A/B tests in native apps, but deploying different versions can be time-consuming, both in developer time to code them and in pushing the new versions out to users. Chat is a great channel for A/B tests because it has a very low barrier to entry: everybody’s already on chat, and they don’t have to download a new app to talk with your chatbot. You can run a lot of chatters through your experiment quickly and thus innovate and iterate rapidly.

There are many low- or no-code platforms for creating chatbots. Using a randomizer, you can direct applicants to either the A process or the B process. This process can also be done in a native app, though it might take a bit more effort to program.
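One way to implement the randomizer is deterministic hashing rather than a fresh coin flip on every visit, so an applicant who drops off and comes back lands in the same journey. This is a minimal sketch, assuming each applicant has a stable ID (such as a chat-platform user ID); `assign_variant` and the experiment name are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Deterministically assign an applicant to journey 'A' or 'B'.

    Hashing (experiment, user_id) gives a stable pseudo-random bucket in 0..9999,
    so the same applicant always sees the same variant of the same experiment,
    while different experiments get independent splits.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return "A" if bucket < split * 10_000 else "B"


print(assign_variant("merchant-123", "phone-timing"))  # same answer on every call
```

Including the experiment name in the hash avoids the same applicants always landing in group A across every experiment you run.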

Next, connect your chatbot, native app, or web app to an analytics monitoring and visualization system. This lets you run event-based analytics, track user behavior at the cohort level, and create visualizations that yield customer-journey insights. We use Amplitude, but there are many options, including Mixpanel and others, depending on which channel you’re generating data in. If you only expect to analyze low volumes of data, you can simply analyze your raw data in a spreadsheet. The important thing is to have a system that lets you understand your data and draw conclusions.
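For low volumes, the core of event-based funnel analysis fits in a few lines. This is a minimal sketch over a raw event log; the `(user_id, variant, event_name)` row shape and the event names are assumptions for illustration, not Amplitude’s or Boost’s actual schema.

```python
from collections import defaultdict


def funnel_by_variant(events, start="application_started", goal="application_completed"):
    """Per variant, return (users who started, of those who reached goal, conversion rate).

    `events` is an iterable of (user_id, variant, event_name) rows.
    """
    started = defaultdict(set)
    reached = defaultdict(set)
    for user_id, variant, name in events:
        if name == start:
            started[variant].add(user_id)
        elif name == goal:
            reached[variant].add(user_id)
    result = {}
    for variant, users in started.items():
        converted = len(users & reached[variant])  # only count users who also started
        result[variant] = (len(users), converted, converted / len(users))
    return result


# Toy data for illustration only.
events = [
    ("u1", "A", "application_started"), ("u1", "A", "application_completed"),
    ("u2", "A", "application_started"),
    ("u3", "B", "application_started"), ("u3", "B", "application_completed"),
]
print(funnel_by_variant(events))
```

Counting distinct users rather than raw events keeps a merchant who triggers an event twice from inflating the rate. Passing a different `goal` (for example, a hypothetical `phone_provided` event) gives the phone-capture rate for the same cohorts.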

With the A/B test results clearly visualized in an event-based analytics program, you can draw clear conclusions and decide on next steps: continue the experiment to expand your test sample size, try something different because your hypothesis was wrong, or celebrate your validated hypothesis and deploy the new method across your whole funnel.
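A common way to choose among those next steps is a two-proportion z-test, plus a rough sample-size estimate for deciding how long to keep collecting data. This is a minimal sketch using the standard normal approximation; the function names and the 5% significance and 80% power defaults are conventional choices, not something this post prescribes.

```python
from math import ceil, sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple:
    """Two-sided z-test for a difference in conversion rates (pooled variance)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all (everyone or no one converted)
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def sample_size_per_group(p_a: float, p_b: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate applicants needed per variant to detect a change from p_a to p_b."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_a * (1 - p_a) + p_b * (1 - p_b)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_b - p_a) ** 2)


def next_step(conv_a: int, n_a: int, conv_b: int, n_b: int, alpha: float = 0.05) -> str:
    """Map a test result onto the three options: keep collecting, keep A, or deploy B."""
    z, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    if p_value >= alpha:
        return "inconclusive: expand the sample or rethink the hypothesis"
    return "deploy B" if z > 0 else "keep A"
```

Plugging in the nudge experiment’s rates, `sample_size_per_group(0.243, 0.265)`, suggests something on the order of 6,000 applicants per variant would be needed to reliably detect a lift that small. Large effects, like a doubling of conversion, need far fewer applicants.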

At Boost, we’ve integrated our tech systems to manage these A/B tests nimbly, but any decent tech solution provider that interfaces with end customers should be doing that. The really important skill is coming up with innovative ideas about what to test! What assumptions should we challenge? How can we not only react nimbly to customer needs but also innovate ahead of customer expectations, providing an onboarding experience better than customers yet know to demand? That’s the real “secret sauce” of awesome A/B testing.